6 January 2025

Can AI Detect Violence in Crowds Before It Happens?

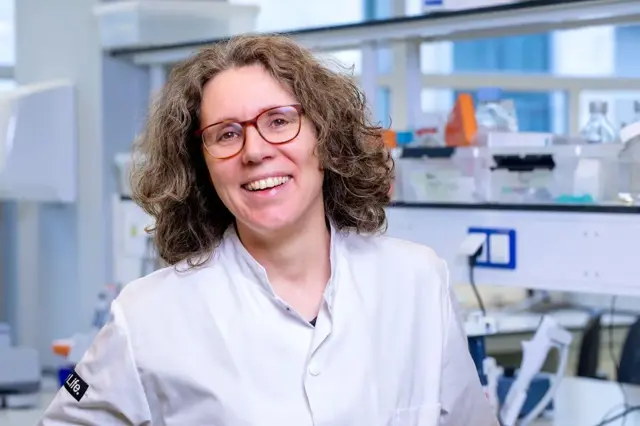

Veltmeijer has explored how AI can automatically analyze large groups of people, which could be valuable for security personnel and crowd managers looking to identify violence or disturbances in crowded areas.

AI for Security in Large Crowds

Veltmeijer focused on developing systems that could be used in practice to improve safety. "I automatically divided crowds into smaller subgroups," says Veltmeijer. "These subgroups can then be analyzed using images, videos, audio, and social media posts." By applying AI models to real-world data, she discovered how to detect fighting groups in video footage and how to use social media posts to track the locations of riots in Amsterdam. She also looked at how crowd noise could help determine whether the atmosphere is positive or negative. These technologies could help security personnel identify violence more quickly and use social media posts to detect unexpected events, such as riots at sports events or demonstrations.

The Ethical Aspect of AI in Crowd Management

While the benefits of AI for security in crowded environments are clear, the application of such technologies raises ethical questions. "It’s important to carefully consider whether collecting detailed information about people in large crowds is desirable," says Veltmeijer. "Collecting data without violating privacy should always be a top priority." In her study, Veltmeijer also examined the impact of the European Artificial Intelligence (AI) Act on automated crowd analysis. She emphasizes the importance of following ethical guidelines when developing AI systems, especially when collecting data from people in public spaces. "AI is playing an increasingly significant role in society, and it is crucial that we use the technology responsibly," says Veltmeijer.

Responsible Use of AI

Her ethical analysis made clear that practical and ethical concerns remain, even with the implementation of the AI Act. Veltmeijer suggests improvements such as collecting data without personal information, considering the context in which data is collected, and taking individual responsibility as a researcher. The results of her research are important not only for those working in crowd management or security but also for anyone who has ever been part of a large crowd. "It’s essential to know your rights and understand how AI systems can contribute to your physical safety without compromising your privacy," Veltmeijer concludes.

Published by Vrije Universiteit Amsterdam.


Similar news items

September 9

Multilingual organizations risk inconsistent AI responses


September 9

Making immunotherapy more effective with AI


September 9

ERC Starting Grant for research on AI’s impact on labor markets and the welfare state
